Back in September 2015, ITC Luxury Travel, a client of over five years, told us about an opportunity they had with the BBC to make a documentary about the world of luxury travel.

A year later, after months of filming in various exotic locations (and Chester), the behind-the-scenes documentary was named "The Millionaires' Holiday Club".

It provided an entertaining, jealousy-inducing glimpse into some of ITC's clients' amazing holidays, along with the work and passion that go into making them happen behind the scenes.

You can watch the trailer below…

My initial comment to Jen Atkinson (CEO at ITC) was something along the lines of: "Wow, now that's going to be some amazing free PR! Please tell me you're doing this!?"

My next thought was that, although this was a TV documentary, we needed to prepare for it digitally. With smartphones and tablets now the norm, we live in a world of "second screen" TV viewing, where people browse on a device while they watch.

The primary challenge was making sure that, in the event of a massive spike in web traffic, the website not only stayed online but also delivered the fast browsing experience visitors expect.

The secondary challenge was maximising the opportunity. I will touch on a few things we did in this area, but this post focuses mainly on what happens to web traffic and how to prepare for it.

So, how much traffic are we going to get?

This was the million-dollar question and, honestly, we didn't know the exact answer until the nights of the shows.

We started like any tech firm would: researching online. Unfortunately, most of the information we found was about websites crashing under TV-related traffic spikes (e.g. companies pitching on shows like BBC's Dragons' Den).

Although ITC's brand would appear in the background and be mentioned, it wasn't going to be done in an overtly commercial way; this was the BBC, after all.

That said, this wasn't a 30-second TV ad. We had two one-hour, prime-time Friday night slots on BBC Two (9pm) to plan for. We also had to consider on-demand viewing through BBC iPlayer, repeats, and different show times in Scotland.

With little insight into how all the footage would eventually be shaped into a show, it was a tricky one to predict!

Estimating Traffic

We started by looking at tools and data on viewing figures to inform our best-guess scenarios. You can get basic information for free from sites like www.barb.co.uk. We also tapped into communities that literally forecast viewing figures for upcoming shows (and they are pretty accurate).

This gave us estimated TV viewing figures. We then ran scenarios on what percentage of those viewers would take the time to Google the ITC brand, find the site and interact with it. Google Analytics data showed us the typical number of pages viewed per session and how long sessions lasted, so we could work out headline numbers for expected page views... except it wasn't that simple.
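The headline arithmetic in this step can be sketched in a few lines of Python. This is a minimal illustration, assuming a single combined "visit rate" (the share of viewers who search for the brand and land on the site); the `Scenario` class and every number below are hypothetical placeholders, not the forecasts or analytics figures we actually used.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    tv_viewers: int           # forecast audience for the slot (hypothetical)
    visit_rate: float         # share of viewers who search and reach the site
    pages_per_session: float  # average pages per session, from analytics

    def sessions(self) -> int:
        return round(self.tv_viewers * self.visit_rate)

    def page_views(self) -> int:
        return round(self.sessions() * self.pages_per_session)


# Hypothetical low / mid / high scenarios for illustration only.
scenarios = [
    Scenario("low", 1_500_000, 0.005, 4.0),
    Scenario("mid", 2_000_000, 0.01, 4.0),
    Scenario("high", 2_500_000, 0.02, 4.0),
]

for s in scenarios:
    print(f"{s.name:>4}: {s.sessions():>7,} sessions, {s.page_views():>8,} page views")
```

Running a few scenarios side by side makes the spread between the cautious and optimistic cases visible, which is more useful for planning than a single number.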

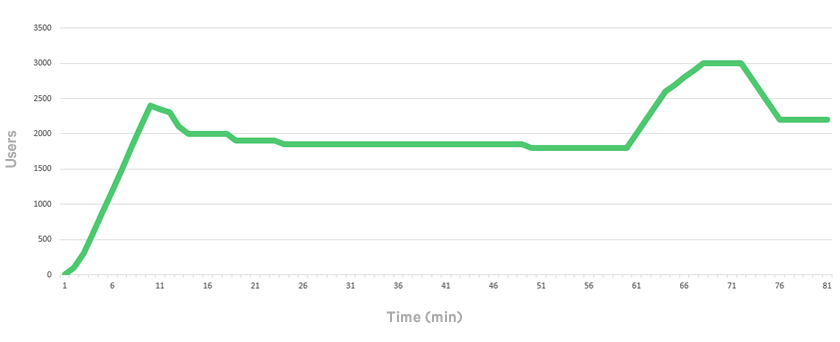

We also had to model how the traffic would flow: would it all arrive in one lump at the start of the show, or more evenly throughout? Ultimately, the metrics you need to prepare for are concurrent users and requests per second.
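Turning a traffic shape into concurrency can be sketched in Python as follows. It is a simplification under stated assumptions: arrivals are bucketed per minute, every session is assumed to last exactly `session_minutes`, and each visitor's page requests are spread evenly across their session. The function names and the example curve (a surge at the start, a lull, then a smaller surge at the end of a 60-minute show) are hypothetical.

```python
def concurrent_users(arrivals_per_minute, session_minutes):
    """Visitors on site during each minute, assuming every session lasts
    exactly session_minutes (real session lengths vary)."""
    concurrency = []
    for t in range(len(arrivals_per_minute)):
        # Anyone who arrived within the last session_minutes is still browsing.
        start = max(0, t - session_minutes + 1)
        concurrency.append(sum(arrivals_per_minute[start:t + 1]))
    return concurrency


def peak_page_rps(arrivals_per_minute, session_minutes, pages_per_session):
    """Peak page requests per second, spreading each visitor's pages
    evenly over their session."""
    peak_users = max(concurrent_users(arrivals_per_minute, session_minutes))
    return peak_users * pages_per_session / (session_minutes * 60)


# Hypothetical 60-minute curve: surge at the start, lull, surge at the end.
arrivals = [3000] * 5 + [800] * 45 + [2500] * 10
users = concurrent_users(arrivals, session_minutes=6)
print("peak concurrent users:", max(users))
print("peak page requests/s:", round(peak_page_rps(arrivals, 6, 4), 1))
```

The sliding window is why, in this model, concurrency keeps rising for a short while after arrivals peak: earlier visitors are still browsing when new ones land.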

We estimated that traffic would build up heavily at the beginning of the show, then even out or drop off a bit before another surge at the end, and this is pretty much exactly what happened.

What surprised us was how many hours after the show we maintained a high level of concurrent visitors. Even six months later, organic traffic is significantly higher thanks to the backlinks and domain authority we accumulated as a result.

Below is a short video showing how traffic ramped up on the night (sadly we don't have footage of the whole thing; come on, it was kind of a busy night!).

On the nights of the shows, mobile and tablet traffic accounted for around 80% of visitors.

What did we do to prepare?

Once we had some scenarios, the first thing we did was run a series of load tests to establish a baseline for how much traffic we could cope with.

The numbers we were aiming for were huge compared to normal traffic levels (even peak-season / January sale levels), and we quite quickly hit our limits, which were a long way short of where we wanted them to be.

There are various tools that can script and simulate website traffic. We used a combination of excellent tools, such as Load Impact and New Relic, to see how the tests were performing, with real-time data on their impact on our servers and infrastructure (e.g. bandwidth, CPU, disk I/O, memory).
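Hosted tools such as Load Impact do this at scale from many locations, but the core idea of a load test fits in a short Python sketch using only the standard library: fire a fixed number of requests through a pool of parallel workers, then report throughput, error count and latency. The `load_test` function and its parameters are hypothetical, and a single machine running this cannot generate anything close to a TV-scale spike; point it only at a test environment you control.

```python
import statistics
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def fetch(url, timeout=10.0):
    """Request one page; return (elapsed seconds, succeeded?)."""
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            resp.read()  # read the whole response body
            ok = 200 <= resp.status < 400
    except Exception:  # timeouts, refused connections, HTTP 4xx/5xx errors
        ok = False
    return time.perf_counter() - start, ok


def load_test(url, total_requests, concurrency):
    """Send total_requests GETs to url using `concurrency` parallel workers."""
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(lambda _: fetch(url), range(total_requests)))
    wall_seconds = time.perf_counter() - started

    latencies = sorted(elapsed for elapsed, ok in results if ok)
    summary = {
        "requests": total_requests,
        "errors": sum(1 for _, ok in results if not ok),
        "requests_per_second": total_requests / wall_seconds,
    }
    if latencies:
        summary["median_s"] = statistics.median(latencies)
        # Nearest-rank approximation of the 95th percentile.
        summary["p95_s"] = latencies[int(0.95 * (len(latencies) - 1))]
    return summary
```

For example, `load_test("http://staging.example.com/", total_requests=500, concurrency=20)` (a hypothetical staging URL) returns a dictionary you can compare run to run as you raise `concurrency` until errors or latency start to climb.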

We involved our trusted hosting partner, UKFast, very early on, making them aware of the show and our tests, and working with them to upgrade and prepare our infrastructure. I can't stress enough how valuable it is to have an experienced and engaged hosting partner. As always, the team at UKFast pulled out all the stops to handle our evolving requests.

Problem 1

The first bottleneck we encountered was actually bandwidth. Because the website is quite media- and graphics-heavy, we found that even moderate levels of traffic overwhelmed our firewall's throughput before anything else. The guys at UKFast changed and upgraded the setup, giving us nearly 10x the throughput. We also moved nearly all website assets (images, video, CSS, JavaScript) to one of our CDN (Content Delivery Network) providers.
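A back-of-the-envelope calculation shows why moving assets to a CDN relieves the origin (and its firewall) so dramatically. This Python sketch assumes a worst case where visitors have nothing in their browser cache; the function name, page sizes and traffic rate are hypothetical, not measurements from the ITC site.

```python
def origin_mbps(pages_per_second, html_kb, asset_kb, assets_on_cdn):
    """Outbound bandwidth from the origin in megabits per second.

    Worst case: every page view downloads the HTML plus all its assets
    (no browser caching). 1 KB is treated as 1,000 bytes for simplicity.
    """
    kb_per_page = html_kb + (0 if assets_on_cdn else asset_kb)
    return pages_per_second * kb_per_page * 8 / 1000  # KB/s -> kbit/s -> Mbit/s


# Hypothetical media-heavy page: 80 KB of HTML, 2,000 KB of images/CSS/JS.
before = origin_mbps(500, html_kb=80, asset_kb=2000, assets_on_cdn=False)
after = origin_mbps(500, html_kb=80, asset_kb=2000, assets_on_cdn=True)
print(f"origin bandwidth: {before:,.0f} Mbit/s before, {after:,.0f} Mbit/s after")
```

Under these assumed sizes, the origin sends 1/26 of the data per page once the assets are served from the CDN, so the same firewall and uplink can carry far more page views.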

This gave us a huge theoretical boost in the number of pages we could serve per second.

Problem 2

Behind the scenes, the ITC website is actually a fairly complex system, with large databases of content and technical integrations for things like online pricing and offers.

Our next challenges were more about the processing power needed to deliver all of this. Load balancing (splitting traffic across multiple servers) can help, but we also invested a lot of time in the world of “caching”.
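In practice, load balancing is handled by dedicated hardware or software in the hosting infrastructure, but the basic idea can be sketched in a few lines of Python: hand each new request to the next server in turn (round robin), skipping any server marked as unhealthy. The class and server names are hypothetical, and the sketch ignores details such as session affinity and weighted servers.

```python
class RoundRobinBalancer:
    """Hands requests to servers in turn, skipping any marked unhealthy."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._next = 0  # index of the server to try next

    def mark_down(self, server):
        self.healthy.discard(server)  # e.g. after a failed health check

    def mark_up(self, server):
        self.healthy.add(server)

    def pick(self):
        # Try each server at most once per call, starting where we left off.
        for _ in range(len(self.servers)):
            server = self.servers[self._next]
            self._next = (self._next + 1) % len(self.servers)
            if server in self.healthy:
                return server
        raise RuntimeError("no healthy servers available")


balancer = RoundRobinBalancer(["web1", "web2", "web3"])
print([balancer.pick() for _ in range(4)])
balancer.mark_down("web2")
print([balancer.pick() for _ in range(3)])
```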

With complex dynamic websites, every time you request a page the web server has to execute code that queries databases, processes data and ultimately stitches everything together into the rendered webpage you see. This is “resource expensive”, so efficient code is critical and it's good practice to cache wherever possible.

In simple terms, caching means letting the server do that work once, storing a copy of the resulting output, and serving that copy from the cache as often as possible (the share of requests served this way is the cache hit rate). This can increase the number of requests per second a server can handle by hundreds, if not thousands, of times.
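A minimal Python sketch of output caching, assuming pages can be keyed by URL and may be up to `ttl_seconds` stale: the first request for a URL renders the page, later requests within the TTL are served from memory, and the object tracks its own hit rate. `PageCache` and `render` are hypothetical names; a production cache (or an accelerator such as Varnish) would also bound memory use and stop many simultaneous misses from all rendering the same page.

```python
import time


class PageCache:
    """In-memory cache of rendered pages with a fixed time-to-live."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock   # injectable so expiry can be tested
        self._store = {}     # url -> (html, expires_at)
        self.hits = 0
        self.misses = 0

    def get_or_render(self, url, render):
        now = self.clock()
        entry = self._store.get(url)
        if entry is not None and entry[1] > now:
            self.hits += 1   # served from cache: no database work
            return entry[0]
        self.misses += 1     # expensive path: run the full page build
        html = render(url)
        self._store[url] = (html, now + self.ttl)
        return html

    @property
    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def render(url):
    # Stand-in for the real page build (database queries, templating, ...).
    return f"<html><body>{url}</body></html>"


cache = PageCache(ttl_seconds=60)
for _ in range(1000):
    cache.get_or_render("/offers", render)
print(f"hit rate: {cache.hit_rate:.1%}")
```

Here the expensive page build runs once for 1,000 requests, which is the kind of multiplier the paragraph above describes.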

We tested various website “accelerators”, such as Varnish, that help with this. However, on complex sites not everything can be cached, so we developed our own caching systems using strategies such as donut caching, where the bulk of a page is cached and only small dynamic sections are generated for each request.
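A minimal Python sketch of that approach, under simplifying assumptions: the expensive, shared part of a page (the "donut") is built once per URL and cached with marker comments where per-request content belongs, and the small dynamic "holes", such as a live price, are filled in on every request. The marker format, `DonutCache` and the fragment functions are hypothetical; this is not ITC's actual implementation, and it omits expiry and invalidation.

```python
HOLE = "<!--hole:{name}-->"


class DonutCache:
    """Caches each page's shell; fills the dynamic holes per request."""

    def __init__(self):
        self._shells = {}  # url -> cached shell HTML containing hole markers

    def render(self, url, build_shell, fragments):
        """build_shell(url) does the expensive work once per URL;
        fragments maps hole name -> zero-argument function called per request."""
        shell = self._shells.get(url)
        if shell is None:
            shell = build_shell(url)
            self._shells[url] = shell
        page = shell
        for name, produce in fragments.items():
            page = page.replace(HOLE.format(name=name), produce())
        return page


def build_shell(url):
    # Stand-in for the heavy render: content, images, navigation...
    return f"<h1>Maldives escape</h1><p>From {HOLE.format(name='price')}</p>"


cache = DonutCache()
prices = iter(["5,000 GBP", "4,800 GBP"])  # hypothetical live pricing feed
print(cache.render("/maldives", build_shell, {"price": lambda: next(prices)}))
print(cache.render("/maldives", build_shell, {"price": lambda: next(prices)}))
```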

After lots of work and testing, we were able to show that we could serve in excess of 100k pages a minute whilst keeping average page load times at around 1.42 seconds.
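To put that figure in per-second terms, the arithmetic is below. The second step applies Little's law ($L = \lambda W$: items in a system equal the arrival rate times the time each spends there) and treats the 1.42-second average page load time as $W$. Because that load time includes time spent in the browser and fetching assets from the CDN, the number of requests actually in progress on the web servers at any instant would be lower, so read it as a rough upper bound rather than a measured figure.

$$
\lambda = \frac{100{,}000\ \text{pages}}{60\ \text{s}} \approx 1{,}667\ \text{pages/s},
\qquad
L = \lambda W \approx 1{,}667\ \text{pages/s} \times 1.42\ \text{s} \approx 2{,}367\ \text{page loads in progress}
$$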

Even with this confidence, we still devised a catch-all plan B: a simple static site that would be served in the event of a meltdown (thankfully, it was never needed!).
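A switch to a static site can be triggered at several levels (DNS, load balancer or application). As one hypothetical application-level version, this Python sketch serves a pre-built static page whenever the dynamic site throws an error or already has too many requests in flight. `StaticFallback` and `max_in_flight` are illustrative names, not part of any real framework.

```python
import threading


class StaticFallback:
    """Serve a pre-built static page when the dynamic site fails or is saturated."""

    def __init__(self, render_dynamic, static_html, max_in_flight):
        self.render_dynamic = render_dynamic
        self.static_html = static_html
        self.max_in_flight = max_in_flight
        self._in_flight = 0
        self._lock = threading.Lock()  # handlers may run on many threads

    def handle(self, url):
        with self._lock:
            if self._in_flight >= self.max_in_flight:
                return self.static_html  # shed load instead of queueing
            self._in_flight += 1
        try:
            return self.render_dynamic(url)
        except Exception:
            return self.static_html  # dynamic stack errored: degrade gracefully
        finally:
            with self._lock:
                self._in_flight -= 1


def broken_render(url):
    raise TimeoutError("database not responding")  # simulated meltdown


site = StaticFallback(broken_render, "<h1>Back soon</h1>", max_in_flight=200)
print(site.handle("/"))
```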

Tips summary

- Plan ahead

- Test, Test & Test!

- Use caching / web accelerators

- Place site assets on a CDN

- Identify bottlenecks / weak points

- Work with your hosting partners

- Review security / pen test

- Optimise for mobile & tablet

- Look for opportunities in advance (PPC campaigns, SEO landing pages, competitions, offers, social etc.)

- Have a backup / disaster recovery plan!

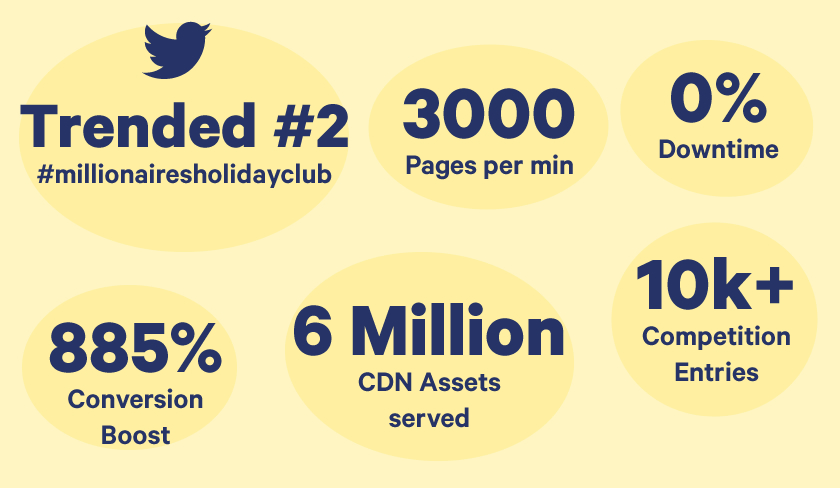

So what happened on the nights of the shows?

Maximising the opportunity

In addition to making sure we could handle the traffic spike, we did a lot of work in the build-up to maximise the opportunity and exposure.

Some of the work included:

- Setting up competition / offers

- Creating "alert bars" across the site to highlight TV show / offers

- Social media / hashtag strategy

- Recruitment drive

- SEO / PPC landing pages around the show's title, so that we would rank for people searching for the show title rather than the brand

- Taking advantage of opportunities to build authoritative backlinks